Finite Complement Topology FXx2 Continuous to Discrete Topology

Appendix 1: Upper bound of \(\Vert \mathbf{\bar{A}_{i}}\Vert _2\) for solid elements

Considering that the ith element is solid (\(x_i = 1\)), \(\mathbf{\bar {K}}\) can be separated as follows:

$$\begin{aligned} \mathbf{\bar {K}} = \mathbf{\bar {R}_{i}} + \mathbf {K}_{i}, \end{aligned}$$

(69)

in which \(\mathbf{\bar {R}_{i}}\) is a positive definite matrix. Thus, \(\mathbf{\bar {A}_{\mathbf i}}\) can be written as follows:

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} = \sqrt{\mathbf {K}_{i}} \left[ \mathbf{\bar {R}_{i}} + \mathbf {K}_{i}\right] ^{-1} \sqrt{\mathbf {K}_{i}}. \end{aligned}$$

(70)

Through the Woodbury identity, it becomes

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} &= \sqrt{\mathbf {K}_{i}} \, \Big[ \mathbf{\bar {R}_{i}}^{-1} - \mathbf{\bar {R}_{i}}^{-1} \sqrt{\mathbf {K}_{i}} \, \big[ \mathbf {I} \\ &\quad + \sqrt{\mathbf {K}_{i}} \, \mathbf{\bar {R}_{i}}^{-1} \sqrt{\mathbf {K}_{i}} \big] ^{-1} \sqrt{\mathbf {K}_{i}} \, \mathbf{\bar {R}_{i}}^{-1} \Big] \sqrt{\mathbf {K}_{i}}. \end{aligned}$$

(71)

Let \(\mathbf{\bar {B}_{i}}\) be defined by

$$\begin{aligned} \mathbf{\bar {B}_{i}} = \sqrt{\mathbf {K_i}} \, \mathbf{\bar {R}_{i}}^{-1} \sqrt{\mathbf {K_i}}. \end{aligned}$$

(72)

Since \(\mathbf{\bar {B}_{i}}\) is symmetric and positive semi-definite, it has an orthogonal eigenvector matrix \(\mathbf {F}\) and a diagonal eigenvalue matrix \(\mathbf {L}\) composed of non-negative values. Its diagonalization is given by

$$\begin{aligned} \mathbf{\bar {B}_{i}} = \mathbf {F}\,\mathbf {L}\,\mathbf {F}^T. \end{aligned}$$

(73)

So Eq. (71) can be rewritten as follows:

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} {}&= \mathbf {F} \left[ \mathbf {L} - \mathbf {L}\left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\mathbf {L}\right] \mathbf {F}^T \\ {}&= \mathbf {F} \left[ \mathbf {L}\left[ \mathbf {I} - \left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\mathbf {L}\right] \right] \mathbf {F}^T. \end{aligned}$$

(74)

By simplifying it, \(\mathbf {\bar{A}_{i}}\) is obtained as follows:

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} = \mathbf {F} \left[ \mathbf {L}\left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\right] \mathbf {F}^T, \end{aligned}$$

(75)

which means that \(\mathbf {F}\) is also an eigenvectors matrix of \(\mathbf{\bar {A}_{\mathbf i}}\) and \(\mathbf {L}\left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\) is the corresponding eigenvalues matrix. Therefore, each eigenvalue \(\lambda _k\) of \(\mathbf{\bar {A}_{\mathbf i}}\) can be written with respect to its corresponding term of \(\mathbf {L}\):

$$\begin{aligned} \lambda _k = \frac{L_k}{1 + L_k} \in [0,1[ \,. \end{aligned}$$

(76)
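One way to see the step from Eq. (74) to Eq. (75) is to note that \(\mathbf {L}\) and \(\left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\) are both diagonal and therefore commute. Under that observation, the bracketed factor can be reduced as sketched below:

$$\begin{aligned} \mathbf {L}\left[ \mathbf {I} - \left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\mathbf {L}\right] &= \mathbf {L}\left[ \mathbf {I}+\mathbf {L}\right] ^{-1}\left[ \left[ \mathbf {I}+\mathbf {L}\right] - \mathbf {L}\right] \\ &= \mathbf {L}\left[ \mathbf {I}+\mathbf {L}\right] ^{-1}. \end{aligned}$$

Entry by entry, this reads \(L_k\left[ 1 - L_k/(1+L_k)\right] = L_k/(1+L_k)\), which is the expression in Eq. (76).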

This means that, when \(x_i=1\), all eigenvalues of \(\mathbf{\bar {A}_{\mathbf i}}\) must be non-negative and strictly < 1. Thus,

$$\boxed {x_i = 1 \Rightarrow \Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2 < 1 \,.} $$

(77)
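The bound in Eq. (77) can be checked numerically on small random matrices. The sketch below is only illustrative: the matrices `R` and `K_i` are random stand-ins (not finite element matrices), and the helper `psd_sqrt` and all variable names are assumptions introduced here.

```python
import numpy as np

def psd_sqrt(K):
    """Symmetric square root of a symmetric positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(K)
    vals = np.clip(vals, 0.0, None)      # remove tiny negative round-off
    return (vecs * np.sqrt(vals)) @ vecs.T

def solid_case_eigenvalues(n=8, rank=3, seed=0):
    """Return eig(A_i) and the prediction L/(1+L) of Eq. (76)."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((n, n))
    R = X @ X.T + n * np.eye(n)          # positive definite stand-in for R_i
    Y = rng.standard_normal((n, rank))
    K_i = Y @ Y.T                        # positive semi-definite stand-in for K_i
    S = psd_sqrt(K_i)
    A = S @ np.linalg.solve(R + K_i, S)  # Eq. (70)
    B = S @ np.linalg.solve(R, S)        # Eq. (72)
    L = np.linalg.eigvalsh(B)            # ascending order
    lam = np.linalg.eigvalsh(A)          # ascending order
    return lam, L / (1.0 + L)            # L/(1+L) is increasing, so order matches

lam, predicted = solid_case_eigenvalues()
print("max |lam - L/(1+L)| =", np.abs(lam - predicted).max())
print("||A_i||_2 =", lam.max())
```

Because \(L_k/(1+L_k)\) is increasing in \(L_k\), sorting both spectra in ascending order is enough to pair the eigenvalues.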

Appendix 2: Counterexample with \(\Vert \mathbf {\bar {A}_{i}}\Vert _2 \ge 1\) for void elements

Considering that the ith element is void (\(x_i = 0\)), \(\mathbf {\bar{K}}\) can be separated as follows:

$$\begin{aligned} \mathbf{\bar{K}} = \mathbf{\bar {R_i}} - \mathbf {K_i}, \end{aligned}$$

(78)

in which \(\mathbf{\bar {R}_{i}}\) is a positive definite matrix. Thus, \(\mathbf{\bar {A}_{\mathbf i}}\) can be written as follows:

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} = \sqrt{\mathbf {K_i}} \left[ \mathbf{\bar {R}_{i}} - \mathbf {K_i}\right] ^{-1} \sqrt{\mathbf {K_i}}. \end{aligned}$$

(79)

Through the Woodbury identity, it becomes

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} &= \sqrt{\mathbf {K}_{i}} \, \Big[ \mathbf{\bar {R}_{i}}^{-1} + \mathbf{\bar {R}_{i}}^{-1} \sqrt{\mathbf {K}_{i}} \, \big[ \mathbf {I} \\ &\quad - \sqrt{\mathbf {K}_{i}} \, \mathbf{\bar {R}_{i}}^{-1} \sqrt{\mathbf {K}_{i}} \big] ^{-1} \sqrt{\mathbf {K}_{i}} \, \mathbf{\bar {R}_{i}}^{-1} \Big] \sqrt{\mathbf {K}_{i}}. \end{aligned}$$

(80)

Let \(\mathbf{\bar {B}_{i}}\) be defined by

$$\begin{aligned} \mathbf{\bar {B_i}} = \sqrt{\mathbf {K_i}} \, \mathbf{\bar {R_i}}^{-1} \sqrt{\mathbf {K_i}}. \end{aligned}$$

(81)

Since \(\mathbf{\bar {B}_{i}}\) is symmetric and positive semi-definite, it has an orthogonal eigenvector matrix \(\mathbf {F}\) and a diagonal eigenvalue matrix \(\mathbf {L}\) composed of non-negative values. Its diagonalization is given by

$$\begin{aligned} \mathbf{\bar {B_i}} = \mathbf {F}\,\mathbf {L}\,\mathbf {F}^T. \end{aligned}$$

(82)

So Eq. (80) can be rewritten as

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} {}&= \mathbf {F} \left[ \mathbf {L} + \mathbf {L}\left[ \mathbf {I}-\mathbf {L}\right] ^{-1}\mathbf {L}\right] \mathbf {F}^T \\ {}&= \mathbf {F} \left[ \mathbf {L}\left[ \mathbf {I} + \left[ \mathbf {I}-\mathbf {L}\right] ^{-1}\mathbf {L}\right] \right] \mathbf {F}^T. \end{aligned}$$

(83)

By simplifying it, \(\mathbf{\bar {A}_{\mathbf i}}\) is obtained as

$$\begin{aligned} \mathbf{\bar {A}_{\mathbf i}} = \mathbf {F} \left[ \mathbf {L}\left[ \mathbf {I}-\mathbf {L}\right] ^{-1}\right] \mathbf {F}^T, \end{aligned}$$

(84)

which means that \(\mathbf {F}\) is also an eigenvectors matrix of \(\mathbf{\bar {A}_{\mathbf i}}\) and \(\mathbf {L}\left[ \mathbf {I}-\mathbf {L}\right] ^{-1}\) is the corresponding eigenvalues matrix. Therefore, each eigenvalue \(\lambda _k\) of \(\mathbf{\bar {A}_{\mathbf i}}\) can be written with respect to its corresponding term of \(\mathbf {L}\):

$$\begin{aligned} \lambda _k = \frac{L_k}{1 - L_k}. \end{aligned}$$

(85)

Since \(\mathbf{\bar {A}_{\mathbf i}}\) is a positive semi-definite matrix with finite eigenvalues, Eq. (85) establishes an upper bound for \(L_k\): \(L_k \in [0,1[\). As for \(\lambda _k\), it is less than 1 only when \(L_k\) is less than 1/2:

$$ \begin{aligned} & L_{k} \in {\text{ }}\left[ {0,\frac{1}{2}} \right[ \Rightarrow \lambda _{k} \in [0,1[; \\ & L_{k} \in \left[ {\frac{1}{2},1} \right[ \Rightarrow \lambda _{k} \in [1,\infty [. \\ \end{aligned} $$

(86)

Thus,

$$\boxed { \begin{aligned}& x_i = 0 \text { and } \Vert \mathbf{\bar {B}_{\mathbf{i}}}\Vert _2 <\frac{1}{2} \Rightarrow \Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2 < 1 \,,\\& x_i = 0 \text { and } \Vert \mathbf{\bar {B}_{\mathbf{i}}}\Vert _2 \ge \frac{1}{2} \Rightarrow \Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2 \ge 1 . \end{aligned}} $$

(87)
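The threshold at \(\Vert \mathbf{\bar {B}_{\mathbf{i}}}\Vert _2 = 1/2\) in Eq. (87) can be illustrated numerically. The sketch below uses random stand-in matrices rather than finite element matrices; the `scale` factor, the helper `psd_sqrt` and the function name are assumptions made for this illustration only.

```python
import numpy as np

def psd_sqrt(K):
    """Symmetric square root of a symmetric positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(K)
    vals = np.clip(vals, 0.0, None)          # remove tiny negative round-off
    return (vecs * np.sqrt(vals)) @ vecs.T

def void_case_eigenvalues(scale, n=8, rank=3, seed=1):
    """Return eig(A_i) and eig(B_i) for a void element, Eqs. (79) and (81)."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((n, n))
    K_bar = X @ X.T + np.eye(n)              # positive definite stand-in for K-bar
    Y = rng.standard_normal((n, rank))
    K_i = scale * (Y @ Y.T)                  # positive semi-definite stand-in for K_i
    R = K_bar + K_i                          # Eq. (78) solved for R_i
    S = psd_sqrt(K_i)
    A = S @ np.linalg.solve(K_bar, S)        # Eq. (79)
    B = S @ np.linalg.solve(R, S)            # Eq. (81)
    return np.linalg.eigvalsh(A), np.linalg.eigvalsh(B)

results = {}
for scale in (1e-3, 1e-1, 1.0):
    lam, L = void_case_eigenvalues(scale)
    results[scale] = (lam, L)
    print(f"scale={scale:g}: ||B_i||_2={L.max():.4f}, ||A_i||_2={lam.max():.4f}")
```

Increasing `scale` makes the element matrix stiffer relative to the rest of the structure, which pushes \(L_k\) towards 1 and lets \(\lambda _k = L_k/(1-L_k)\) grow without bound.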

It will be shown with a counterexample that \(\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2\) may indeed assume values \(> 1\) when \(x_i=0\).

The value of \(\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2\) highly depends on the current topology and on the soft-kill parameter \(\varepsilon _{\text {k}}\). The presence of nodes connected only to void elements, especially for a small \(\varepsilon _{\text {k}}\), favors higher values of \(\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2\).

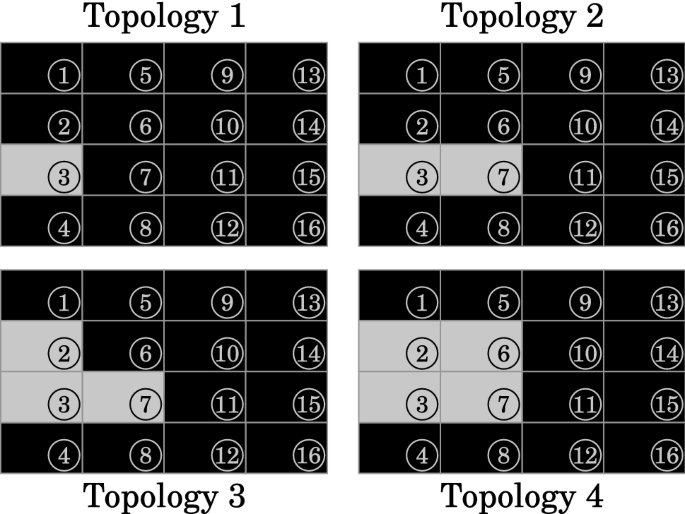

In Fig. 26, four topologies are presented for a cantilever beam of dimensions 80 \(\times \) 50 mm, clamped on its left end. Solid elements are represented in black and void elements in gray; elements are numbered from top to bottom, starting at the leftmost column. Four-node bilinear quadrilateral elements of dimensions 20.0 \(\times \) 12.5 mm were considered, in a plane stress state. The material is homogeneous and isotropic, with a Young's modulus of 210 GPa and a Poisson's ratio of 0.3.

Fig. 26: Different topologies for a \(4 \times 4\) mesh

For \(\varepsilon _{\text {k}} = 0.1\), Table 4 presents the values of \(\Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2\) and \(\Vert \mathbf{\bar {B}_{\mathbf{i}}}\Vert _2\) for each void element in these topologies. It can be seen that Eq. (85) is satisfied and that \(\Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2\) is \(< 1\) when \(\Vert \mathbf{\bar {B}_{\mathbf{i}}}\Vert _2\) is less than 1/2.

Even for such unusually high \(\varepsilon _{\text {k}}\), except for the void element in Topology 1, all values of \(\Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2\) were > 1. This suggests that, in a practical case, \(\Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2\) will hardly be \(< 1\) for any void element.

Appendix 3: Selective inverse update

Assuming that the selective inverse of \(\mathbf{\bar {K}}\) is known, the goal is to obtain its updated value after a variation \({{\varvec{\Delta}} {\mathbf{K}}}\). The matrix \(\mathbf {I_\Delta }\) matches the identity matrix for entries corresponding to valued terms of \({{\varvec{\Delta}} {\mathbf{K}}}\), and it is zero-valued elsewhere. The updated complete inverse can be written through the Woodbury identity as follows:

$$\begin{aligned} \left[ \mathbf{\bar {K}} + {{\varvec{\Delta}} {\mathbf{K}}}\right] ^{-1} & = \mathbf{\bar {K}}^{-1} - \mathbf{\bar {K}}^{-1}\,\mathbf {I_\Delta }\,{{\varvec{\Delta}} {\mathbf{K}}} \Big [\,\mathbf {I} \\ {}&\quad + \mathbf {I_\Delta }\,\mathbf{\bar {K}}^{-1}\,\mathbf {I_\Delta }\,{{\varvec{\Delta}} {\mathbf{K}}}\Big ]^{-1} \mathbf {I_\Delta }\,\mathbf{\bar {K}}^{-1} \,. \end{aligned} $$

(88)

By defining \(\mathbf {Q}\) and \(\mathbf {T}\) as

$$\begin{aligned} \mathbf {Q} = {{\varvec{\Delta}} {\mathbf{K}}} \left[ \mathbf {I} + \mathbf {I_\Delta }\,\mathbf{\bar {K}}^{-1}\,\mathbf {I_\Delta }\,{{\varvec{\Delta}} {\mathbf{K}}}\right] ^{-1} \mathbf {I_\Delta } \end{aligned}$$

(89)

and

$$\begin{aligned} \mathbf {T} = \mathbf {I_\Delta }\,\mathbf{\bar {K}}^{-1}, \end{aligned}$$

(90)

the updated complete inverse can be written as shown below:

$$\begin{aligned} \left[ \mathbf{\bar {K}} + {{\varvec{\Delta}} {\mathbf{K}}}\right] ^{-1} = \mathbf{\bar {K}}^{-1} -\mathbf {T}^T\,\mathbf {Q}\,\mathbf {T}. \end{aligned}$$

(91)

Let \(g_{\Delta }\) be the dimension of the valued part of \({{\varvec{\Delta}} {\mathbf{K}}}\), and let G be the dimension of the whole matrix. After each alteration, \(g_{\Delta }\) linear systems of dimension G must be solved to compute \(\mathbf {T}\), and a matrix of dimension \(g_{\Delta }\) must be inverted to compute \(\mathbf {Q}\).

All columns \(\mathbf {t_i}\) of \(\mathbf {T}\) have only \(g_{\Delta }\) valued terms. The dimension of the valued submatrix of \(\mathbf {Q}\) is also \(g_{\Delta }\). Therefore, after obtaining \(\mathbf {T}\) and \(\mathbf {Q}\), each updated entry, given by

$$\boxed {\left[ \mathbf{\bar {K}} + {{\varvec{\Delta}} {\mathbf{K}}}\right] ^{-1}_{i_1 i_2} = \mathbf{\bar {K}}^{-1}_{i_1 i_2} -\mathbf {t_{i_1}}^T\,\mathbf {Q}\,\mathbf {t_{i_2}} ,} $$

(92)

can be obtained by computing a bilinear expression of dimension \(g_{\Delta }\).

Even for a small topological variation, which means a small \(g_{\Delta }\), this update procedure would still be costly, since it involves solving linear systems of dimension G. Nevertheless, it should be advantageous over obtaining the selective inverse from scratch in each iteration of the topology optimization algorithm.
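The update of Eqs. (89) to (92) can be sketched in a few lines. The code below is a minimal dense illustration, assuming a symmetric \(\mathbf{\bar {K}}\) and a \({{\varvec{\Delta}} {\mathbf{K}}}\) whose nonzero block acts on a known list of degrees of freedom `idx`; the function name, the argument layout and the random test matrices are hypothetical and are not taken from the original work.

```python
import numpy as np

def updated_inverse_entries(K_bar, dK_sub, idx, old_entries):
    """Update selected entries of K_bar^{-1} after a local change Delta K.

    K_bar       : (G, G) symmetric positive definite matrix
    dK_sub      : (g, g) nonzero block of Delta K, acting on the DOFs in idx
    idx         : list with the g DOFs touched by Delta K
    old_entries : dict {(i1, i2): entry (i1, i2) of K_bar^{-1}}
    """
    G, g = K_bar.shape[0], len(idx)
    E = np.zeros((G, g))
    E[idx, np.arange(g)] = 1.0
    # g linear systems of dimension G give the nonzero rows of T, Eq. (90)
    T_rows = np.linalg.solve(K_bar, E).T                  # shape (g, G)
    # Nonzero block of Q, Eq. (89): one inversion of dimension g
    Q_sub = dK_sub @ np.linalg.inv(np.eye(g) + T_rows[:, idx] @ dK_sub)
    # Eq. (92): one bilinear form of dimension g per requested entry
    return {
        (i1, i2): val - T_rows[:, i1] @ Q_sub @ T_rows[:, i2]
        for (i1, i2), val in old_entries.items()
    }

rng = np.random.default_rng(0)
G, idx = 10, [2, 3, 5]
X = rng.standard_normal((G, G))
K_bar = X @ X.T + G * np.eye(G)
Y = rng.standard_normal((len(idx), len(idx)))
dK_sub = Y @ Y.T
K_inv = np.linalg.inv(K_bar)            # full inverse, used only to seed the demo
wanted = [(0, 0), (2, 5), (7, 3), (9, 9)]
old_entries = {ij: K_inv[ij] for ij in wanted}
new_entries = updated_inverse_entries(K_bar, dK_sub, idx, old_entries)

# Reference: invert the modified matrix from scratch
dK = np.zeros((G, G))
dK[np.ix_(idx, idx)] = dK_sub
reference = np.linalg.inv(K_bar + dK)
for ij in wanted:
    print(ij, new_entries[ij], reference[ij])
```

In a sparse setting, the dense `np.linalg.solve` call would be replaced by whatever factorization of \(\mathbf{\bar {K}}\) is already available; that choice is outside the scope of this sketch.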

Appendix 4: Explicit expressions for the CGS approach

Appendix 4.1: Definitions and notation

For the ith element, let the matrix \(\mathbf{\bar{\bar {K}}}\) be defined as

$$ \mathbf{\bar{\bar {K}}} = \mathbf{\bar {K}} + {{\varvec{\Delta}} {\mathbf{K}}}, $$

(93)

where \({{\varvec{\Delta}} {\mathbf{K}}}\) is given by

$$ {\mathbf{\Delta K}} = \left\{ {\begin{array}{*{20}l} {{\mathbf{K}}_{{\mathbf{i}}} ,} \hfill & {{\text{if}}\;x_{i} = 0,} \hfill \\ { - {\mathbf{K}}_{{\mathbf{i}}} ,} \hfill & {{\text{if}}\;x_{i} = 1.} \hfill \\ \end{array} } \right. $$

(94)

Let \(\bar{C}\), \(C_i\), \(C_{\Delta }\) and \(C_T\) be the scalars defined as follows:

$$\begin{aligned} \bar{C} = \frac{1}{2}\,\mathbf{\bar {u}}^T \, \mathbf {f}, \end{aligned}$$

(95)

$$\begin{aligned} C_i = \frac{1}{2}\,\mathbf{\bar {u}}^T \, \mathbf {K_i} \, \mathbf{\bar {u}}, \end{aligned}$$

(96)

$$\begin{aligned} C_{\Delta } = \frac{1}{2}\,\mathbf{\bar {u}}^T \, {{\varvec{\Delta}} {\mathbf{K}}} \, \mathbf{\bar {u}} \,, \end{aligned}$$

(97)

and

$$\begin{aligned} C_{T} = \bar{C} + C_{\Delta }. \end{aligned}$$

(98)

For a given preconditioner matrix \(\mathbf {M}\), let the matrices \(\mathbf {W_j}\) be defined as follows:

$$\begin{aligned} \mathbf {W_j} = \mathbf {M}^{-1} \left[ \mathbf{\bar{\bar{K}}}\,\mathbf {M}^{-1}\right] ^{j}. \end{aligned}$$

(99)

The following notation will be used in this appendix to represent inner products in a more compact way:

$$\begin{aligned} \left\langle \mathbf {a_1},\, \mathbf {a_2} \right\rangle _{j} = \left\langle \mathbf {a_1},\, \mathbf {a_2} \right\rangle _{\mathbf {W_j}} = \mathbf {a_1}^T\,\mathbf {W_j}\,\mathbf {a_2}; \end{aligned}$$

(100)

$$\begin{aligned} \left\langle \mathbf {a} \right\rangle _{j} = \left\langle \mathbf {a} \right\rangle _{\mathbf {W_j}} = \Vert \mathbf {a}\Vert _{\mathbf {W_j}}^2 = \mathbf {a}^T\,\mathbf {W_j}\,\mathbf {a}. \end{aligned}$$

(101)

Three initial conditions were considered: in the first case, \(\mathbf {u_0} = \mathbf {0}\) and \(\mathbf {d_0} = \mathbf {M}^{-1}\,\mathbf {f}\) (direction of steepest descent); in the second case, \(\mathbf {u_0} = \mathbf{\bar {u}}\) and \(\mathbf {d_0} = -\mathbf {M}^{-1}\,{{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf{\bar {u}}\) (direction of steepest descent); and in the third case, \(\mathbf {u_0} = \mathbf {0}\) and \(\mathbf {d_0} = \mathbf{\bar {u}}\).

Appendix 4.2: Explicit expressions for the 1st case

Considering \(\mathbf {u_0} = \mathbf {0}\) and \(\mathbf {d_0} = \mathbf {M}^{-1}\,\mathbf {f}\), the first step of the CGM results in the displacements vector

$$\begin{aligned} \mathbf {u_1} = \left[ \frac{\langle \mathbf {f} \rangle _0}{\langle \mathbf {f} \rangle _1}\right] \mathbf {W_0}\,\mathbf {f}. \end{aligned}$$

(102)

The coefficients \(\langle \mathbf {f} \rangle _0\) and \(\langle \mathbf {f} \rangle _1\) are given by

$$\begin{aligned} \langle \mathbf {f} \rangle _0 = \mathbf {f}^T\,\mathbf {v_M} \end{aligned}$$

(103)

and

$$\begin{aligned} \langle \mathbf {f} \rangle _1 = \mathbf {v_{K}}^T \, \mathbf {v_M}, \end{aligned}$$

(104)

where the vectors \(\mathbf {v_M}\) and \(\mathbf {v_K}\) correspond to

$$\begin{aligned} \mathbf {v_M} = \mathbf {M}^{-1}\,\mathbf {f} \end{aligned}$$

(105)

and

$$\begin{aligned} \mathbf {v_K} = \mathbf{\bar {K}}\,\mathbf {v_M} + {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf {v_M}. \end{aligned}$$

(106)

From Eq. (58), the sensitivity expression is obtained as follows:

$$\boxed{\alpha _{i}^{{\langle 1\rangle }} = \left\{ {\begin{array}{*{20}l} { - \left[ {\bar{C} - \frac{{\langle {\mathbf{f}}\rangle _{0} ^{2} }}{{2{\mkern 1mu} \langle {\mathbf{f}}\rangle _{1} }}} \right],} \hfill & {{\text{if }}x_{i} = 0,} \hfill \\ { - \left[ {\frac{{\langle {\mathbf{f}}\rangle _{0} ^{2} }}{{2{\mkern 1mu} \langle {\mathbf{f}}\rangle _{1} }} - \bar{C}} \right],} \hfill & {{\text{if }}x_{i} = 1.} \hfill \\ \end{array} } \right.} $$

(107)

For a preconditioner matrix \(\mathbf {M} = \mathbf{\bar {K}}\), \(\mathbf {v_M} = \mathbf{\bar {u}}\) and the sensitivity expression becomes

$$\boxed {\alpha ^{\langle 1 \rangle }_i = - \left[ \frac{\bar{C}}{C_{T}}\right] C_i,\quad x_i \in \{0,1\}.}$$

(108)
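The reduction of Eq. (107) to Eq. (108) can be verified by direct substitution. The sketch below assumes that \(\mathbf{\bar {u}}\) satisfies \(\mathbf{\bar {K}}\,\mathbf{\bar {u}} = \mathbf {f}\), which is consistent with \(\mathbf {v_M} = \mathbf{\bar {u}}\) for \(\mathbf {M} = \mathbf{\bar {K}}\):

$$\begin{aligned} \langle \mathbf {f} \rangle _0 &= \mathbf {f}^T\,\mathbf{\bar {u}} = 2\,\bar{C}, \\ \langle \mathbf {f} \rangle _1 &= \left[ \mathbf{\bar {K}}\,\mathbf{\bar {u}} + {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf{\bar {u}}\right] ^T \mathbf{\bar {u}} = 2\,\bar{C} + 2\,C_{\Delta } = 2\,C_T, \\ \frac{\langle \mathbf {f} \rangle _0^2}{2\,\langle \mathbf {f} \rangle _1} &= \frac{\bar{C}^2}{C_T}. \end{aligned}$$

For \(x_i = 0\), Eq. (94) gives \(C_{\Delta } = C_i\), so \(-\left[ \bar{C} - \bar{C}^2/C_T\right] = -\left[ \bar{C}/C_T\right] \left[ C_T - \bar{C}\right] = -\left[ \bar{C}/C_T\right] C_i\). For \(x_i = 1\), \(C_{\Delta } = -C_i\), so \(-\left[ \bar{C}^2/C_T - \bar{C}\right] = -\left[ \bar{C}/C_T\right] \left[ \bar{C} - C_T\right] = -\left[ \bar{C}/C_T\right] C_i\). Both branches give the same expression.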

The second step of the CGM results in the displacements vector:

$$\begin{aligned} \mathbf {u_2} & = \left[ \frac{\langle \mathbf {f} \rangle _0 \, \langle \mathbf {f} \rangle _3 - \langle \mathbf {f} \rangle _1 \, \langle \mathbf {f} \rangle _2}{\langle \mathbf {f} \rangle _1 \, \langle \mathbf {f} \rangle _3 - \langle \mathbf {f} \rangle _2 \, \langle \mathbf {f} \rangle _2}\right] \mathbf {W_0}\,\mathbf {f} \\ & \quad + \left[ \frac{\langle \mathbf {f} \rangle _1 \, \langle \mathbf {f} \rangle _1 - \langle \mathbf {f} \rangle _0 \, \langle \mathbf {f} \rangle _2}{\langle \mathbf {f} \rangle _1 \, \langle \mathbf {f} \rangle _3 - \langle \mathbf {f} \rangle _2 \, \langle \mathbf {f} \rangle _2}\right] \mathbf {W_1}\,\mathbf {f}. \end{aligned} $$

(109)

The coefficients \(\langle \mathbf {f} \rangle _2\) and \(\langle \mathbf {f} \rangle _3\) are given by

$$\begin{aligned} \langle \mathbf {f} \rangle _2 = \mathbf {v_K}^T \, \mathbf {v_L} \end{aligned}$$

(110)

and

$$\begin{aligned} \langle \mathbf {f} \rangle _3 = \mathbf {v_R}^T \, \mathbf {v_L} \,, \end{aligned}$$

(111)

where the vectors \(\mathbf {v_L}\) and \(\mathbf {v_R}\) correspond to

$$\begin{aligned} \mathbf {v_L} = \mathbf {M}^{-1}\,\mathbf {v_K} \end{aligned}$$

(112)

and

$$\begin{aligned} \mathbf {v_R} = \mathbf{\bar {K}}\,\mathbf {v_L} + {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf {v_L}. \end{aligned}$$

(113)

From Eq. (58), the sensitivity expression is obtained as follows:

$$\boxed { \alpha _{i}^{{\langle 1\rangle }} = \left\{ {\begin{array}{*{20}l} { - \left[ {\bar{C} - \frac{{\langle {\mathbf{f}}\rangle _{0} ^{2} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{3} - 2{\mkern 1mu} \langle {\mathbf{f}}\rangle _{0} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{2} + \langle {\mathbf{f}}\rangle _{1} ^{3} }}{{2{\mkern 1mu} [\langle {\mathbf{f}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{3} - \langle {\mathbf{f}}\rangle _{2} ^{2} ]}}} \right]} \hfill \\ {{\text{if}}\;x_{i} = 0,} \hfill \\ { - \left[ {\frac{{\langle {\mathbf{f}}\rangle _{0} ^{2} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{3} - 2{\mkern 1mu} \langle {\mathbf{f}}\rangle _{0} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{2} + \langle {\mathbf{f}}\rangle _{1} ^{3} }}{{2{\mkern 1mu} [\langle {\mathbf{f}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{f}}\rangle _{3} - \langle {\mathbf{f}}\rangle _{2} ^{2} ]}} - \bar{C}} \right]} \hfill \\ {{\text{if}}\;x_{i} = 1.} \hfill \\ \end{array} } \right. } $$

(114)

For this and all the following sensitivity expressions, the selective inverse of \(\mathbf{\bar {K}}\) would be needed to use \(\mathbf {M} = \mathbf{\bar {K}}\). To reduce computational costs, a diagonal \(\mathbf {M}\) is recommended (Jacobi preconditioning).
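The two-step expression of Eq. (109) can be compared against two iterations of a textbook preconditioned conjugate gradient loop. The sketch below is a minimal check under stated assumptions: `K` plays the role of \(\mathbf{\bar{\bar {K}}}\) and is a random symmetric positive definite stand-in, `M_inv` is a Jacobi preconditioner, and the function names are hypothetical.

```python
import numpy as np

def pcg(K, f, M_inv, steps):
    """Preconditioned conjugate gradient from u0 = 0, fixed number of steps."""
    u = np.zeros_like(f)
    r = f.copy()                      # residual f - K u0
    z = M_inv @ r
    d = z.copy()                      # d0 = M^{-1} f
    for _ in range(steps):
        Kd = K @ d
        alpha = (r @ z) / (d @ Kd)
        u = u + alpha * d
        r_new = r - alpha * Kd
        z_new = M_inv @ r_new
        beta = (r_new @ z_new) / (r @ z)
        d = z_new + beta * d
        r, z = r_new, z_new
    return u

def explicit_two_step(K, f, M_inv):
    """Eq. (109) and the fraction that appears in Eq. (114)."""
    v_M = M_inv @ f                   # Eq. (105), equals W_0 f
    v_K = K @ v_M                     # Eq. (106), with K = K-bar + Delta K
    v_L = M_inv @ v_K                 # Eq. (112), equals W_1 f
    v_R = K @ v_L                     # Eq. (113)
    f0, f1, f2, f3 = f @ v_M, v_K @ v_M, v_K @ v_L, v_R @ v_L
    den = f1 * f3 - f2 ** 2
    u2 = ((f0 * f3 - f1 * f2) / den) * v_M + ((f1 * f1 - f0 * f2) / den) * v_L
    fraction = (f0 ** 2 * f3 - 2 * f0 * f1 * f2 + f1 ** 3) / (2 * den)
    return u2, fraction

rng = np.random.default_rng(0)
n = 12
X = rng.standard_normal((n, n))
K = X @ X.T + n * np.eye(n)           # stand-in for K-bar + Delta K
M_inv = np.diag(1.0 / np.diag(K))     # Jacobi preconditioning
f = rng.standard_normal(n)

u2_cg = pcg(K, f, M_inv, steps=2)
u2_explicit, fraction = explicit_two_step(K, f, M_inv)
print("max difference in u2:", np.abs(u2_cg - u2_explicit).max())
print("f^T u2 / 2 =", 0.5 * f @ u2_explicit, " fraction =", fraction)
```

The explicit form needs only two applications of \(\mathbf {M}^{-1}\) and two products with \(\mathbf{\bar {K}} + {{\varvec{\Delta}} {\mathbf{K}}}\), which is why a diagonal \(\mathbf {M}\) keeps the cost low.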

Appendix 4.3: Explicit expressions for the 2nd case

Considering \(\mathbf {u_0} = \mathbf{\bar {u}}\) and \(\mathbf {d_0} = -\mathbf {M}^{-1}\,{{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf{\bar {u}}\), the first step of the CGM results in the displacements vector

$$\begin{aligned} \mathbf {u_1} = \mathbf{\bar {u}} + \left[ \frac{\langle \mathbf {b} \rangle _0}{\langle \mathbf {b} \rangle _1}\right] \mathbf {W_0}\,\mathbf {b}, \end{aligned}$$

(115)

where the vector \(\mathbf {b}\) corresponds to

$$ {\mathbf{b}} = - {\mathbf{\Delta K}}{\mkern 1mu} \mathbf{{\bar{u}}} = \left\{ {\begin{array}{*{20}l} { - {\mathbf{K}}_{{\mathbf{i}}} {\mkern 1mu} \mathbf{{\bar{u}}} ,} \hfill & {{\text{if}}\;x_{i} = 0,} \hfill \\ {{\mathbf{K}}_{{\mathbf{i}}} {\mkern 1mu} {\mathbf{\bar{u}}}, } \hfill & {{\text{if}}\;x_{i} = 1.} \hfill \\ \end{array} } \right. $$

(116)

The coefficients \(\langle \mathbf {b} \rangle _0\) and \(\langle \mathbf {b} \rangle _1\) are given by

$$\begin{aligned} \langle \mathbf {b} \rangle _0 = \mathbf {b}^T\,\mathbf {v_M} \end{aligned}$$

(117)

and

$$\begin{aligned} \langle \mathbf {b} \rangle _1 = \mathbf {v_{K}}^T \, \mathbf {v_M}, \end{aligned}$$

(118)

where the vectors \(\mathbf {v_M}\) and \(\mathbf {v_K}\) were redefined as follows:

$$\begin{aligned} \mathbf {v_M} = \mathbf {M}^{-1}\,\mathbf {b} \end{aligned}$$

(119)

and

$$\begin{aligned} \mathbf {v_K} = \mathbf{\bar {K}}\,\mathbf {v_M} + {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf {v_M}. \end{aligned}$$

(120)
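The step length in Eq. (115) follows from the standard conjugate gradient line search. As a sketch, assuming that \(\mathbf{\bar {u}}\) satisfies \(\mathbf{\bar {K}}\,\mathbf{\bar {u}} = \mathbf {f}\), the initial residual \(\mathbf {r_0}\) (a symbol introduced here for illustration) coincides with \(\mathbf {b}\):

$$\begin{aligned} \mathbf {r_0} &= \mathbf {f} - \mathbf{\bar{\bar {K}}}\,\mathbf{\bar {u}} = \mathbf {f} - \mathbf{\bar {K}}\,\mathbf{\bar {u}} - {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf{\bar {u}} = \mathbf {b}, \\ \mathbf {d_0} &= \mathbf {M}^{-1}\,\mathbf {b} = \mathbf {W_0}\,\mathbf {b}, \\ \frac{\mathbf {r_0}^T\,\mathbf {d_0}}{\mathbf {d_0}^T\,\mathbf{\bar{\bar {K}}}\,\mathbf {d_0}} &= \frac{\mathbf {b}^T\,\mathbf {M}^{-1}\,\mathbf {b}}{\mathbf {b}^T\,\mathbf {M}^{-1}\,\mathbf{\bar{\bar {K}}}\,\mathbf {M}^{-1}\,\mathbf {b}} = \frac{\langle \mathbf {b} \rangle _0}{\langle \mathbf {b} \rangle _1}. \end{aligned}$$

Adding this multiple of \(\mathbf {d_0}\) to \(\mathbf {u_0} = \mathbf{\bar {u}}\) reproduces Eq. (115).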

From Eq. (59), the sensitivity expression is obtained as follows:

$$\boxed{ \alpha _{i}^{{\langle 1\rangle }} = \left\{ {\begin{array}{*{20}l} { - \left[ {C_{i} - \frac{{\langle {\mathbf{b}}\rangle _{0} ^{2} }}{{2{\mkern 1mu} \langle {\mathbf{b}}\rangle _{1} }}} \right],} \hfill & {{\text{if}}\;x_{i} = 0,} \hfill \\ { - \left[ {C_{i} + \frac{{\langle {\mathbf{b}}\rangle _{0} ^{2} }}{{2{\mkern 1mu} \langle {\mathbf{b}}\rangle _{1} }}} \right],} \hfill & {{\text{if}}\;x_{i} = 1.} \hfill \\ \end{array} } \right. }$$

(121)

The second step of the CGM results in the displacements vector:

$$\begin{aligned} \mathbf {u_2} & = \mathbf{\bar {u}} + \left[ \frac{\langle \mathbf {b} \rangle _0 \, \langle \mathbf {b} \rangle _3 - \langle \mathbf {b} \rangle _1 \, \langle \mathbf {b} \rangle _2}{\langle \mathbf {b} \rangle _1 \, \langle \mathbf {b} \rangle _3 - \langle \mathbf {b} \rangle _2 \, \langle \mathbf {b} \rangle _2}\right] \mathbf {W_0}\,\mathbf {b} \\ &\quad + \left[ \frac{\langle \mathbf {b} \rangle _1 \, \langle \mathbf {b} \rangle _1 - \langle \mathbf {b} \rangle _0 \, \langle \mathbf {b} \rangle _2}{\langle \mathbf {b} \rangle _1 \, \langle \mathbf {b} \rangle _3 - \langle \mathbf {b} \rangle _2 \, \langle \mathbf {b} \rangle _2}\right] \mathbf {W_1}\,\mathbf {b}. \end{aligned} $$

(122)

The coefficients \(\langle \mathbf {b} \rangle _2\) and \(\langle \mathbf {b} \rangle _3\) are given by

$$\begin{aligned} \langle \mathbf {b} \rangle _2 = \mathbf {v_K}^T \, \mathbf {v_L} \end{aligned}$$

(123)

and

$$\begin{aligned} \langle \mathbf {b} \rangle _3 = \mathbf {v_R}^T \, \mathbf {v_L}, \end{aligned}$$

(124)

where the vectors \(\mathbf {v_L}\) and \(\mathbf {v_R}\) were redefined as follows:

$$\begin{aligned} \mathbf {v_L} = \mathbf {M}^{-1}\,\mathbf {v_K} \end{aligned}$$

(125)

and

$$\begin{aligned} \mathbf {v_R} = \mathbf{\bar {K}}\,\mathbf {v_L} + {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf {v_L}. \end{aligned}$$

(126)

From Eq. (59), the sensitivity expression is obtained as follows:

$$\boxed { \alpha _{i}^{{\langle 1\rangle }} = \left\{ {\begin{array}{*{20}l} { - \left[ {C_{i} - \frac{{\langle {\mathbf{b}}\rangle _{0} ^{2} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{3} - 2{\mkern 1mu} \langle {\mathbf{b}}\rangle _{0} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{2} + \langle {\mathbf{b}}\rangle _{1} ^{3} }}{{2{\mkern 1mu} [\langle {\mathbf{b}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{3} - \langle {\mathbf{b}}\rangle _{2} ^{2} ]}}} \right]} \hfill \\ {{\text{if}}\;x_{i} = 0,} \hfill \\ { - \left[ {C_{i} + \frac{{\langle {\mathbf{b}}\rangle _{0} ^{2} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{3} - 2{\mkern 1mu} \langle {\mathbf{b}}\rangle _{0} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{2} + \langle {\mathbf{b}}\rangle _{1} ^{3} }}{{2{\mkern 1mu} [\langle {\mathbf{b}}\rangle _{1} {\mkern 1mu} \langle {\mathbf{b}}\rangle _{3} - \langle {\mathbf{b}}\rangle _{2} ^{2} ]}}} \right]} \hfill \\ {{\text{if}}\;x_{i} = 1.} \hfill \\ \end{array} } \right. } $$

(127)

Appendix 4.4: Explicit expressions for the 3rd case

Considering \(\mathbf {u_0} = \mathbf {0}\) and \(\mathbf {d_0} = \mathbf{\bar {u}}\), the first step of the CGM results in the displacements vector:

$$\begin{aligned} \mathbf {u_1} = \frac{\bar{C}}{C_{T}}\,\mathbf{\bar {u}}. \end{aligned}$$

(128)

Both Eqs. (58) and (59) produce the same sensitivity expression, given by

$$\boxed {\alpha ^{\langle 1 \rangle }_i = - \left[ \frac{\bar{C}}{C_{T}}\right] C_i, \quad x_i \in \{0,1\} ,}$$

(129)

which is the same as Eq. (108).
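The coefficient in Eq. (128) can be recovered from the conjugate gradient line search along \(\mathbf {d_0} = \mathbf{\bar {u}}\). The sketch below assumes \(\mathbf{\bar {K}}\,\mathbf{\bar {u}} = \mathbf {f}\), so that \(\mathbf{\bar {u}}^T\,\mathbf{\bar {K}}\,\mathbf{\bar {u}} = \mathbf{\bar {u}}^T\,\mathbf {f} = 2\,\bar{C}\); with \(\mathbf {u_0} = \mathbf {0}\), the initial residual is \(\mathbf {f}\):

$$\begin{aligned} \frac{\mathbf {d_0}^T\,\mathbf {f}}{\mathbf {d_0}^T\,\mathbf{\bar{\bar {K}}}\,\mathbf {d_0}} = \frac{\mathbf{\bar {u}}^T\,\mathbf {f}}{\mathbf{\bar {u}}^T\,\mathbf{\bar {K}}\,\mathbf{\bar {u}} + \mathbf{\bar {u}}^T\,{{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf{\bar {u}}} = \frac{2\,\bar{C}}{2\,\bar{C} + 2\,C_{\Delta }} = \frac{\bar{C}}{C_T}. \end{aligned}$$

This is the same ratio \(\bar{C}/C_T\) that appears in the first case when \(\mathbf {M} = \mathbf{\bar {K}}\), which is consistent with Eqs. (108) and (129) being identical.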

The second step of the CGM results in the displacements vector:

$$\begin{aligned} \mathbf {u_2} &= \left[ \frac{\bar{C}\,{\langle \mathbf {z},\, \mathbf {g} \rangle _0}^2 - 2\,\bar{C}\,C_T\,\langle \mathbf {g} \rangle _1 - C_T\,\langle \mathbf {g} \rangle _0\,\langle \mathbf {z},\, \mathbf {g}\rangle _0}{C_T\,{\langle \mathbf {z},\, \mathbf {g} \rangle _0}^2 - 2\,{C_T}^2\,\langle \mathbf {g} \rangle _1}\right] \mathbf{\bar {u}} \\ {}&\quad +\left[ \frac{2\,{C_T}^2\,\langle \mathbf {g} \rangle _0}{C_T\,{\langle \mathbf {z},\, \mathbf {g} \rangle _0}^2 - 2\,{C_T}^2\,\langle \mathbf {g} \rangle _1}\right] \mathbf {W_0}\,\mathbf {g}, \end{aligned} $$

(130)

where the vectors \(\mathbf {z}\) and \(\mathbf {g}\) correspond to

$$\begin{aligned} \mathbf {z} = \mathbf {f} - \mathbf {b} \end{aligned}$$

(131)

and

$$\begin{aligned} \mathbf {g} = \frac{\bar{C}}{C_T}\,\mathbf {z} -\mathbf {f}. \end{aligned}$$

(132)

The coefficients \(\langle \mathbf {z},\, \mathbf {g} \rangle _0\), \(\langle \mathbf {g} \rangle _0\) and \(\langle \mathbf {g} \rangle _1\) are given by

$$\begin{aligned} \langle \mathbf {z},\, \mathbf {g} \rangle _0 = \mathbf {v_M}^T \, \mathbf {z}, \end{aligned}$$

(133)

$$\begin{aligned} \langle \mathbf {g} \rangle _0 = \mathbf {v_M}^T \, \mathbf {g} \end{aligned}$$

(134)

and

$$\begin{aligned} \langle \mathbf {g} \rangle _1 = \mathbf {v_M}^T \, \mathbf {v_K}, \end{aligned}$$

(135)

where the vectors \(\mathbf {v_M}\) and \(\mathbf {v_K}\) were redefined as follows:

$$\begin{aligned} \mathbf {v_M} = \mathbf {M}^{-1}\,\mathbf {g} \end{aligned}$$

(136)

and

$$\begin{aligned} \mathbf {v_K} = \mathbf{\bar {K}}\,\mathbf {v_M} + {{\varvec{\Delta}} {\mathbf{K}}}\,\mathbf {v_M}. \end{aligned}$$

(137)

Both Eqs. (58) and (59) produce the same sensitivity expression, given by

$$\boxed { \alpha _{i}^{{\langle 1\rangle }} = \left\{ {\begin{array}{*{20}l} { - \left[ {\frac{{\bar{C}}}{{C_{T} }}} \right]C_{i} + \left[ {\frac{{C_{T} {\mkern 1mu} \langle {\mathbf{g}}\rangle _{0} ^{2} }}{{2{\mkern 1mu} C_{T} {\mkern 1mu} \langle {\mathbf{g}}\rangle _{1} - \langle {\mathbf{z}},\;{\mathbf{g}}\rangle _{0} ^{2} }}} \right]} \hfill \\ {{\text{if}}\;x_{i} = 0,} \hfill \\ { - \left[ {\frac{{\bar{C}}}{{C_{T} }}} \right]C_{i} - \left[ {\frac{{C_{T} {\mkern 1mu} \langle {\mathbf{g}}\rangle _{0} ^{2} }}{{2{\mkern 1mu} C_{T} {\mkern 1mu} \langle {\mathbf{g}}\rangle _{1} - \langle {\mathbf{z}},\;{\mathbf{g}}\rangle _{0} ^{2} }}} \right]} \hfill \\ {{\text{if}}\;x_{i} = 1.} \hfill \\ \end{array} } \right. }$$

(138)

Appendix 5: Upper bounds of \(\Vert \mathbf {\bar{A}_{i}}\Vert _2\) for void elements and of sensitivity error values

To obtain the element-wise sensitivity error for the FOCI and HOCI expressions, the qth-order truncated series of Eq. (40) is subtracted from the exact WS expression of Eq. (43), and the absolute value of the result is taken:

$$\begin{aligned} {\varepsilon _{\alpha } = \left| -\frac{1}{2}\,\mathbf{\bar {w_i}}^T\left[ \left[ \mathbf {I} \pm \mathbf{ \bar{\varvec{\Lambda }}_{\mathbf{i}}}\right] ^{-1} - \sum \limits _{a=1}^{q}\left[ \mp \mathbf{\bar {\varvec{\Lambda }}_{\mathbf{i}}}\right] ^{a-1} \right] \mathbf{\bar {w}_{\mathbf{i}}}\right|,} \end{aligned}$$

(139)

where the signs \(\pm \, {\text {and}} \,\mp\) are used to perform the demonstration simultaneously for void and solid elements.

Since these are diagonal matrices, the expression can be written in terms of the diagonal values \(\lambda _k\) and their corresponding term in \(\mathbf{\bar {w}_{i}}\) that will be referred to as \(w_k\):

$$\begin{aligned} {\varepsilon _{\alpha } = \left| -\frac{1}{2}\,\sum \limits _{k=1}^{G} \left[ \frac{1}{1 \pm \lambda _k} - \sum \limits _{a=1}^{q} [\mp \lambda _k]^{a-1} \right] w_k^2\right| .} \end{aligned}$$

(140)

It should be noted that, although the index k goes up to G in the summation, \(\mathbf{\bar {A}_{\mathbf i}}\) has at most \(g_i\) nonzero eigenvalues and \(\mathbf{\bar {w}_{i}}\) has at most \(g_i\) nonzero entries. Thus, if local matrices and vectors were defined for the ith element, G could be replaced by \(g_i\).

Equation (140) can be simplified as follows:

$${\begin{aligned} \varepsilon _{\alpha } &= \frac{1}{2} \left| \sum \limits _{k=1}^{G}\left[ \frac{1-[1+[\pm \lambda _k][\mp \lambda _k]^{q-1}]}{1\pm \lambda _k}\right] w_k^2\right| \\& = \frac{1}{2} \left| \sum \limits _{k=1}^{G}\left[ \frac{[\mp \lambda _k][\mp \lambda _k]^{q-1}}{1\pm \lambda _k}\right] w_k^2\right| . \end{aligned}} $$

(141)

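For void elements, the denominator \(1 + \lambda _k\) is positive because \(\lambda _k \ge 0\); for solid elements, the denominator \(1 - \lambda _k\) is positive because \(\lambda _k<1\). In addition, the numerators \([\mp \lambda _k]^{q}\) have the same sign for all k, so the absolute value of the sum equals the sum of the absolute values. Therefore, \(\varepsilon _{\alpha }\) can be expressed as follows: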

$$\begin{aligned} {\varepsilon _{\alpha } = \frac{1}{2} \sum \limits _{k=1}^{G}\left[ \frac{\lambda _k^{q}}{1\pm \lambda _k}\right] w_k^2 .} \end{aligned}$$

(142)

For both void and solid elements, the term between brackets increases monotonically with \(\lambda _k\). Therefore, the following upper bound can be defined:

$$\begin{aligned} {\varepsilon _{\alpha } \le \frac{1}{2}\left[ \frac{\max (\mathbf{\bar {\varvec{\Lambda }}_{\mathbf{i}}})^{q}}{1 \pm \max (\mathbf{\bar {\varvec{\Lambda }}_{\mathbf{i}}})}\right] \sum \limits _{k=1}^{G} w_k^2 .} \end{aligned}$$

(143)

Since \(\max (\mathbf{\bar {\varvec{\Lambda }}_{\mathbf{i}}}) = \Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2\) and \(\mathbf{\bar {w_i}}^T\,\mathbf{\bar {w_i}} = \mathbf{\bar {u}}^T\,\mathbf {K_i}\,\mathbf{\bar {u}}\), the upper bound can be rewritten as follows:

$${\boxed {\varepsilon _{\alpha } \le \left[ \frac{1}{2}\,\mathbf{\bar {u}}^T\,\mathbf {K_i}\,\mathbf{\bar {u}}\right] \left[ \frac{{\Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2}^{q}}{1 \pm \Vert \mathbf{\bar {A}_{\mathbf{i}}}\Vert _2}\right] .}} $$

(144)
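The chain from Eq. (140) to Eq. (143) can be checked numerically for a given spectrum. The sketch below uses randomly drawn values of \(\lambda _k \in [0, 0.9]\) and \(w_k\) as stand-ins; the function names and the boolean flag `void` (upper sign when true, lower sign when false) are assumptions made for this illustration.

```python
import numpy as np

def truncation_error(lam, w, q, void):
    """Eq. (140): error of the q-term truncated series."""
    s = 1.0 if void else -1.0                 # s = +1: 1/(1+lam); s = -1: 1/(1-lam)
    exact = 1.0 / (1.0 + s * lam)
    series = sum((-s * lam) ** (a - 1) for a in range(1, q + 1))
    return abs(-0.5 * np.sum((exact - series) * w ** 2))

def closed_form_error(lam, w, q, void):
    """Eq. (142): closed form of the same error."""
    s = 1.0 if void else -1.0
    return 0.5 * np.sum(lam ** q / (1.0 + s * lam) * w ** 2)

def error_bound(lam, w, q, void):
    """Eq. (143): bound based on the largest eigenvalue."""
    s = 1.0 if void else -1.0
    lam_max = lam.max()
    return 0.5 * lam_max ** q / (1.0 + s * lam_max) * np.sum(w ** 2)

rng = np.random.default_rng(0)
lam = rng.uniform(0.0, 0.9, size=6)           # stand-in eigenvalues of A_i
w = rng.standard_normal(6)                    # stand-in entries of w_i

checks = []
for void in (True, False):
    for q in range(1, 6):
        e140 = truncation_error(lam, w, q, void)
        e142 = closed_form_error(lam, w, q, void)
        e143 = error_bound(lam, w, q, void)
        checks.append((e140, e142, e143))
        print(f"void={void}, q={q}: error={e142:.3e}, bound={e143:.3e}")
```

For the sampled spectrum, the bound tightens as q grows because every \(\lambda _k\) is below 1; this is the regime covered by Eq. (77) for solid elements.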

To obtain an upper bound for \(\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2\), the submultiplicative property of the considered norm is used:

$$\begin{aligned} {\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2 = \Vert \sqrt{\mathbf {K_i}}\,\mathbf{\bar {K}}^{-1} \sqrt{\mathbf {K_i}}\Vert _2 \le \Vert \sqrt{\mathbf {K_i}}\Vert _2\ \, \Vert \mathbf{\bar {K}}^{-1}\Vert _2 \, \Vert \sqrt{\mathbf {K_i}}\Vert _2\ .} \end{aligned}$$

(145)

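Since \(\sqrt{\mathbf {K_i}}\) is symmetric and positive semi-definite, \(\Vert \sqrt{\mathbf {K_i}}\Vert _2^2 = \Vert \mathbf {K_i}\Vert _2\), so this upper bound can be rewritten as follows: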

$$\begin{aligned} {\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2 \le \Vert \mathbf {K_i}\Vert _2\,\Vert \mathbf{\bar {K}}^{-1}\Vert _2 = \frac{\Vert \mathbf {K_i}\Vert _2}{\Vert \mathbf{\bar {K}}\Vert _2}\,\kappa (\mathbf{\bar {K}}),} \end{aligned}$$

(146)

where \(\kappa (\mathbf{\bar{K}})\) denotes the condition number of \(\mathbf{\bar {K}}\), which is defined as \(\kappa (\mathbf{\bar {K}}) = \Vert \mathbf{\bar {K}}\Vert _2\,\Vert \mathbf{\bar {K}}^{-1}\Vert _2\).
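The inequality in Eqs. (145) and (146) can be illustrated with a small numerical experiment. The sketch below uses random symmetric stand-ins for \(\mathbf{\bar {K}}\) and \(\mathbf {K_i}\); the helper `psd_sqrt` and the variable names are assumptions introduced here.

```python
import numpy as np

def psd_sqrt(K):
    """Symmetric square root of a symmetric positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(K)
    vals = np.clip(vals, 0.0, None)           # remove tiny negative round-off
    return (vecs * np.sqrt(vals)) @ vecs.T

rng = np.random.default_rng(0)
n, rank = 8, 3
X = rng.standard_normal((n, n))
K_bar = X @ X.T + np.eye(n)                   # positive definite stand-in for K-bar
Y = rng.standard_normal((n, rank))
K_i = Y @ Y.T                                 # positive semi-definite stand-in for K_i

S = psd_sqrt(K_i)
A = S @ np.linalg.solve(K_bar, S)             # A_i as written in Eq. (145)

norm_A = np.linalg.norm(A, 2)                 # spectral norms throughout
norm_S = np.linalg.norm(S, 2)
norm_Ki = np.linalg.norm(K_i, 2)
norm_K = np.linalg.norm(K_bar, 2)
norm_Kinv = np.linalg.norm(np.linalg.inv(K_bar), 2)
kappa = np.linalg.cond(K_bar, 2)

bound = norm_Ki / norm_K * kappa              # right-hand side of Eq. (146)
print("||A_i||_2 =", norm_A, " bound =", bound)
```

The bound is usually loose, since it pairs the stiffest direction of \(\mathbf {K_i}\) with the most flexible direction of \(\mathbf{\bar {K}}\), which need not coincide.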

In Fried (1973), it was shown that the largest eigenvalue of \(\mathbf{\bar {K}}\) is greater than or equal to the largest eigenvalue of each elemental matrix that composes it. This means that \(\Vert \mathbf{\bar {K}}\Vert _2 \ge \Vert \varepsilon _{\text {k}}\,\mathbf {K_i^{[0]}} + x_i\,\mathbf {K_i}\Vert _2\) for all elements. Thus, if \(x_i=1\),

$$\begin{aligned} {\Vert \mathbf{\bar {K}}\Vert _2 \ge \Vert \mathbf {K_i^{[0]}}\Vert _2 = \frac{1}{1-\varepsilon _{\text {k}}}\,\Vert \mathbf {K_i}\Vert _2 > \Vert \mathbf {K_i}\Vert _2} \end{aligned}$$

(147)

and, if \(x_i=0\),

$$\begin{aligned} {\Vert \mathbf{\bar {K}}\Vert _2 \ge \varepsilon _{\text {k}}\,\Vert \mathbf {K_i^{[0]}}\Vert _2 = \frac{\varepsilon _{\text {k}}}{1-\varepsilon _{\text {k}}}\,\Vert \mathbf {K_i}\Vert _2 > \varepsilon _{\text {k}}\,\Vert \mathbf {K_i}\Vert _2 .} \end{aligned}$$

(148)

To obtain a tighter lower bound for \(\Vert \mathbf{\bar {K}}\Vert _2\) with respect to \(\Vert \mathbf {K_i}\Vert _2\) when \(x_i = 0\), we consider topologies in which, for any void element of index \(i_\text {v}\), there is at least one solid element of index \(i_\text {s}\) such that \(\Vert \mathbf {K_{i_s}}\Vert _2 \ge \Vert \mathbf {K_{i_v}}\Vert _2\). Then, for any void element of index \(i_\text {v}\), the following bound is directly obtained from Eq. (147):

$$\begin{aligned} {\Vert \mathbf{\bar {K}}\Vert _2 > \Vert \mathbf {K_{i_s}}\Vert _2 \ge \Vert \mathbf {K_{i_v}}\Vert _2 .} \end{aligned}$$

(149)

Therefore, for topologies that satisfy this condition, \(\Vert \mathbf{\bar {K}}\Vert _2 > \Vert \mathbf {K_i}\Vert _2\) for all elements (not only for solid ones). Finally, by applying this on Eq. (146), the following upper bound is obtained for \(\Vert \mathbf{\bar {A}_{\mathbf i}}\Vert _2\):

$${\boxed {\Vert \mathbf{\bar {A_i}}\Vert _2 < \kappa (\mathbf{\bar {K}}) .}} $$

(150)

When a structured grid mesh with identical elements is considered, it is very reasonable to expect that the topologies will satisfy such condition. In this kind of mesh, the only distinction between elements that may alter the value of \(\Vert \mathbf {K_i}\Vert _2\) is the different mechanical restrictions that may be applied on their boundaries. To satisfy the considered condition, it is sufficient that there is at least one solid element in all distinct configurations (with respect to the applied mechanical restrictions).

For example, in the standard cantilever beam composed of rectangular elements, there are only two distinct configurations: elements that are next to the clamped boundary, and elements that are not. This means that, if there is at least one solid element next to the clamped boundary and at least one solid element away from it, the condition is satisfied. This will always be the case for structures that are not degenerate, since solid elements should connect the loads to the mechanical restrictions.

In more complex settings, it is possible to have topologies that are not degenerate but still fail to satisfy the condition. If many types of mechanical restrictions are present, some of them can be artificially removed from the design domain throughout the optimization process. However, this is undesirable, since it would qualitatively change the optimization problem that was originally meant to be solved.

To handle this issue, designers may include procedures to prevent artificial removal of restrictions or, if it is appropriate, they may truly remove any restriction that becomes disconnected from the structure. Thus, except in very particular cases, the considered condition should always be satisfied for the topologies that appear in the optimization process.

Source: https://link.springer.com/article/10.1007/s00158-021-03066-z
